Stop Getting Hacked Through AI

Your code leaks every time your team uses AI. Samsung learned this the hard way. Pretense hides it before it leaves the laptop.

Deploy in 30 seconds

No credit card required

Works with all AI tools

Your AI Tools Are Stealing Your Code

Real-time code protection

Your code gets hidden before AI sees it.

Automation built into your workflow

Works with the tools you already pay for.

Real-time insights and analytics

See every leak before it becomes a lawsuit.

Enterprise-grade access control

Lock down who sees what.

Everything You Need To Stop AI Leaks

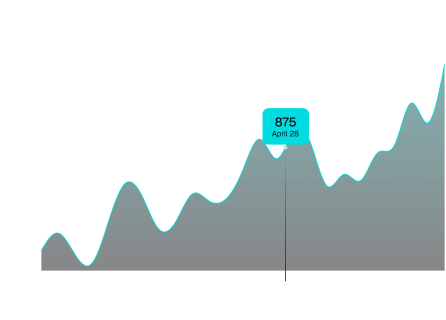

Track daily code protection activity trends

Visualize Code Protection trends over time and understand how AI interactions impact your codebase.

Real-time Code Protection trends and growth

Identify spikes and unusual activity

Filter by time range and usage patterns

| Time | Status | Event | Repo | User | File |
|---|---|---|---|---|---|
| 10:55:10 | [SUCCESS] | Variable masked | frontend-web | Emily Tran | src/users/service.py |
| 10:54:22 | [BLOCKED] | Database connection failed | backend-service | John Doe | src/db/connect.py |
| 10:53:55 | [PROTECTED] | Rate limit exceeded | frontend-web | Emily Tran | src/users/service.py |
| 10:52:46 | [SUCCESS] | Email sent successfully | frontend-web | Emily Tran | src/users/service.py |

See activity as it happens in real time

Monitor live Code Protection across your system with instant updates and status visibility.

Real-time stream of Code Protection activity

Status indicators (Success, Blocked, Mutated)

Track user, repo, and file-level activity

| Metric | Value | Detail |
|---|---|---|
| Total Code Protection | 1542 | vs Apr 10 - Apr 18 |
| Top user | Jason Lee | 420 Protects |
| Most active repo | | 612 Protects |
| Total Code Protection by action | Scan: 1120 (73%) | Proxy: 422 (27%) |

Get insights at a glance across your system

Understand key metrics, top contributors, and overall Code Protection activity in one place with better clarity.

Total Code Protection and growth trends over time

Top users and most active repositories overall

Action breakdown & usage analytics across teams

Protect your code in 3 simple steps

| Step | Status |
|---|---|
| Install the CLI. One command. macOS & Linux ready. | Done |
| Create your organization. Organization name | |

Install and connect your workflow

Install the CLI and connect your tools in seconds—no setup required.

| Activity | When | Action |
|---|---|---|
| Ran on frontend-web | 2 hrs ago | Protect |
| Protection on API Service | 5 hrs ago | Protect |
| Granted New API Key | 1 day ago | Protect |

Protect code before it reaches AI

Automatically replace sensitive identifiers before sending code to any LLM.

| Repository | File Path | Status |
|---|---|---|
| | src/users/service.py | Success |
| | src/settings/config.py | Protected |
| | src/orders/controller.py | Success |
| | src/profile/view.py | Blocked |
| | src/files/upload.py | Protected |
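
To make the idea of replacing sensitive identifiers concrete, here is a minimal Python sketch. It is an illustration only, not Pretense's implementation: the function name `mask_identifiers`, the `ident_N` placeholder scheme, and the sample identifiers are all assumptions made for this example.

```python
import re


def mask_identifiers(source: str, sensitive: list[str]) -> tuple[str, dict[str, str]]:
    """Swap each sensitive identifier for a neutral placeholder.

    Hypothetical helper: returns the masked source plus the
    real-name -> placeholder mapping, which stays on the local machine.
    """
    # Sort so the same input always yields the same placeholder numbering.
    mapping = {
        name: f"ident_{i}"
        for i, name in enumerate(sorted(set(sensitive)), start=1)
    }
    if not mapping:
        return source, {}

    # Word boundaries keep us from rewriting substrings of longer names.
    pattern = re.compile(
        r"\b(" + "|".join(re.escape(name) for name in mapping) + r")\b"
    )
    masked = pattern.sub(lambda m: mapping[m.group(1)], source)
    return masked, mapping


# Made-up example code containing names we would not want to share.
code = "def charge_acme_customer(acme_api_key):\n    return bill(acme_api_key)\n"
masked, mapping = mask_identifiers(code, ["charge_acme_customer", "acme_api_key"])
print(masked)
```

The structure of the code is unchanged, so an AI assistant can still reason about it, while the original names appear only in the local mapping.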

Track activity across your team

Monitor Code Protection, scans, and usage with full real-time visibility across your entire system.

Trusted protection for modern AI workflows

| Feature | Pretense | Traditional DLP | No Protection |
|---|---|---|---|
| Code Protection (preserves AI context) | | | |
| Deploys in 30 seconds | - | | |
| Works offline (local-first) | | | |
| Multi-provider (Claude + GPT + Gemini) | - | | |
| SOC2/HIPAA audit trail | | | |
| Starting at $0/month | | | |

Choose the plan that fits your workflow

Free

Perfect for getting started with code mutation

$0 /month

Get Started Free

1,000 mutations 7 days

Up to 3 seats

7-day log retention

Not included in Free:

Unlimited mutations

25+ seats

SOC 2 exports

Priority support

Pro

Built for growing teams that need more power and flexibility

$29 /seat/month

Upgrade to Pro

Unlimited mutations

25+ seats

SOC 2 exports

30-day log retention

Priority email support

Advanced insights

Dedicated support & CSM

Enterprise

For large organizations with advanced security needs.

Custom Pricing

Contact Sales

Everything in Pro

Custom seat provisioning

Dedicated support & CSM

SLA & uptime guarantee

SOC 2 compliance (Coming Soon)

HIPAA support (Coming Soon)

SAML / SSO (Coming Soon)

No data leaves your system

Built for secure workflows

Works with your existing tools

Cancel anytime seamlessly

Trusted for Security

“Setup took less than a minute. We started seeing Code Protection activity immediately across the team.”

Sarah Lewis

Backend Developer

“We were worried about sending sensitive code to LLMs. Pretense solved that instantly without changing how we work.”

Alex Chen

Senior Engineer, Devcore

“Pretense gave us visibility and control over how AI tools interact with our code. That's something we didn't have before.”

Sarah Kim

Security Lead, Byteshield

“Setup took less than a minute. We started seeing Code Protection activity immediately across the team.”

Jason Lee

CTO, Buildflow

“We stopped worrying about sending proprietary code to AI tools. Pretense made that a non-issue.”

Rahul Mehta

Security Engineer, Finstack

“I haven't seen anything else that protects code before it reaches AI. This is a completely new layer of security.”

Daniel Park

Platform Engineer, Infralabs

Got questions? We've got answers.

Here's everything you need to know before getting started.

Pretense acts as a firewall between your code and AI tools. It mutates sensitive identifiers before sending code to any LLM, ensuring your real data never leaves your system.
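
The "firewall" idea can be pictured as a wrapper around any AI call. The Python sketch below is a hedged illustration, not Pretense's actual code: `protected_call`, `swap`, the placeholder `fn_a`, and the stand-in `fake_llm` are hypothetical names, and restoring the real names in the reply is an assumption about how such a layer could work.

```python
import re
from typing import Callable


def swap(text: str, table: dict[str, str]) -> str:
    """Replace every whole-word key of `table` in `text` with its value."""
    if not table:
        return text
    pattern = re.compile(r"\b(" + "|".join(map(re.escape, table)) + r")\b")
    return pattern.sub(lambda m: table[m.group(1)], text)


def protected_call(prompt: str, table: dict[str, str],
                   send: Callable[[str], str]) -> str:
    """Mask the prompt, call the provider, then restore names locally.

    `table` maps real identifiers to placeholders; `send` is any function
    that forwards text to an AI provider (assumed, provider-agnostic).
    """
    outbound = swap(prompt, table)            # real names -> placeholders
    reply = send(outbound)                    # only masked text leaves
    reverse = {placeholder: real for real, placeholder in table.items()}
    return swap(reply, reverse)               # placeholders -> real names


seen = []  # records what the stand-in provider actually received


def fake_llm(text: str) -> str:
    """Stand-in for a real provider call; just echoes its input."""
    seen.append(text)
    return f"Reviewed: {text}"


result = protected_call(
    "def acme_payroll_total(rows): ...",
    {"acme_payroll_total": "fn_a"},
    fake_llm,
)
print(seen[0])
print(result)
```

Because `send` is just a callable, the same wrapper could sit in front of any provider without changing the calling code.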
